GPU Instancer: Best Practices


GPU Instancer provides an out-of-the-box solution for improving performance and comes with an easy-to-use interface to get you started. Although GPUI internally uses fairly complex optimization techniques, it is designed to let you use these techniques in your project without going through their steep learning curves (or development time). However, by following a few rules of thumb, you can use these techniques as intended and get the most out of GPUI.

On this page, you will find best practices for using GPUI that will help you get better performance.


Instance Counts

The Higher the Instance Counts, the Better

The rule of thumb here is to render only the prefabs with high instance counts through GPUI, while keeping the number of GPUI prototypes defined on the managers as low as possible.

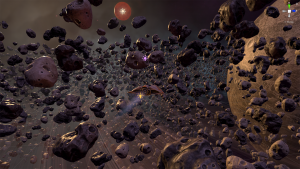

When using the Prefab Manager in your scene, the best practice is to add the prefabs that repeat many times in the scene to the manager as prototypes. For example, the included PrefabInstancingDemo scene has three asteroid prototypes. When the scene generates these asteroids around the planet, the resulting instance counts are around 16.5k for each. GPUI draws each of these prototypes in a single draw call; however, since each asteroid prefab uses a LOD Group with three LOD levels, the result is 9 draw calls (3 x 3) for all of these asteroids. Note also that the AsteroidHazeQuad prefab (essentially a quad with a custom shader that makes the scene look dynamic and foggy) is added to the manager as a prototype even though it has only 28 instances. The point, then, is not a hard lower limit on instance counts, but that the asteroids, with far higher instance counts than the haze quads, gain much more from instancing than the haze quads do. Notice that the planet and the sun are not defined as prototypes, since there is only one of each in the scene and they would therefore gain nothing from instancing.
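The draw call arithmetic above can be sketched as a small Python model. This is an illustration, not GPUI code: the `Prototype` class and `instanced_draw_calls` function are hypothetical, and the model assumes each LOD level contributes one draw call per mesh/material combination, independent of how many instances there are. The ~16.5k counts are the approximate figures from the demo scene description.

```python
from dataclasses import dataclass

@dataclass
class Prototype:
    """Hypothetical model of a GPUI prototype (not part of the GPUI API)."""
    name: str
    instance_count: int
    lod_levels: int = 1
    renderers_per_lod: int = 1  # mesh/material combinations in each LOD level

def instanced_draw_calls(prototypes):
    # One draw call per mesh/material combination per LOD level,
    # no matter how many instances share it.
    return sum(p.lod_levels * p.renderers_per_lod for p in prototypes)

asteroids = [Prototype(f"Asteroid{i}", 16_500, lod_levels=3) for i in range(1, 4)]
print(instanced_draw_calls(asteroids))  # 9

# Ten times as many instances still gives the same number of draw calls.
more = [Prototype(p.name, p.instance_count * 10, p.lod_levels) for p in asteroids]
print(instanced_draw_calls(more))  # 9
```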

Thus, using GPUI for prototypes with very low instance counts is not recommended. GPUI uses a single draw call for every mesh/material combination and performs all culling operations on the GPU. While these operations are very fast and cost-efficient, there is no reason to spend GPU resources on them when instance counts are so low that the performance gain from instancing would not be noticeable.

Furthermore, since prefabs with low instance counts will not gain a noticeable performance boost from GPU Instancing, it is usually better to let Unity handle their rendering. Unity uses draw call batching techniques in the background (such as dynamic batching). These techniques run on the CPU and use CPU memory. When there are many instances of the same prefabs, these operations become too costly, and the reduction in batch counts they achieve is dwarfed by that of GPU Instancing. This is where GPUI shines the most. But where instance counts are noticeably low, the CPU cost of these techniques becomes trivial, and they still reduce batch counts and thus draw calls. With GPU instancing, on the other hand, meshes are not combined, so every mesh/material combination is always one draw call. Note also that there is no magic number to use as a minimum instance count, since it depends heavily on the poly counts of the meshes, how they are distributed in the scene, and so on.

In short, GPU Instancing helps you the most when there are many instances of the same prototype, and adding prototypes with low instance counts is not recommended. The only exception is when instance counts reach extreme numbers such as millions. See the Using GPUI for Unity Terrain Details section below for more on this.

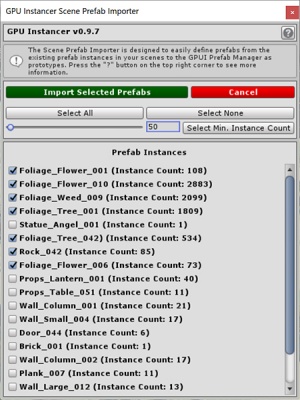

Scene Prefab Importer

GPUI comes with a Scene Prefab Importer tool that helps you manage this easily. You can open it from:

Tools -> GPU Instancer -> Show Scene Prefab Importer

- The Scene Prefab Importer tool will show you all the GameObjects in the open scene that are instances of a Prefab - and their instance counts in the scene.

- You can use the Select Min. Instance Count slider and button to select the prefabs that have at least a given number of instances.

- You can then click Import Selected Prefabs, and GPUI will create a Prefab Manager in your scene with the selected prefabs as prototypes.
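The selection made with the Select Min. Instance Count slider can be pictured as a simple threshold filter. The Python sketch below is a hypothetical model, not the tool's implementation; the prefab names other than AsteroidHazeQuad are made up, and the thresholds are arbitrary examples, since there is no magic minimum count.

```python
def select_prefabs(instance_counts, min_instance_count):
    """Return the names of prefabs whose scene instance count is at least
    min_instance_count, highest counts first (ties keep their input order)."""
    selected = [name for name, count in instance_counts.items()
                if count >= min_instance_count]
    return sorted(selected, key=lambda name: instance_counts[name], reverse=True)

# Approximate counts modeled on the PrefabInstancingDemo description; names are hypothetical.
scene_counts = {
    "Planet": 1,
    "Sun": 1,
    "AsteroidHazeQuad": 28,
    "Asteroid1": 16_500,
    "Asteroid2": 16_500,
    "Asteroid3": 16_500,
}

print(select_prefabs(scene_counts, 20))
# ['Asteroid1', 'Asteroid2', 'Asteroid3', 'AsteroidHazeQuad']
print(select_prefabs(scene_counts, 1_000))
# ['Asteroid1', 'Asteroid2', 'Asteroid3']
```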

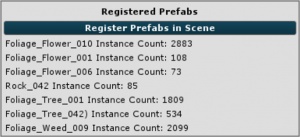

Registered Prefabs in the Prefab Manager

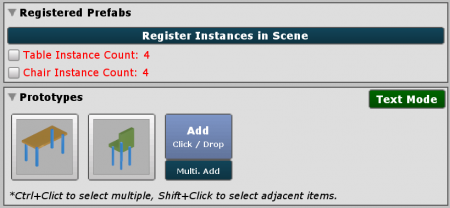

When your Prefabs are defined in the Prefab Manager, the manager will show you the instance counts that are registered on it.

- If you add any new prefab instances in your scene (or remove any), the instance counts must be registered again in the manager.

- You can simply click the Register Prefabs in Scene button to update the instance counts in the manager.

- If you add or remove instances at runtime, you can either register them manually through the GPU Instancer API or, if you set up your prototypes to do so, let GPUI handle this for you automatically.

A Single Container Prefab vs Many Prefabs

One important thing to notice about instance counts relates to the structure of your prefabs. If you have a prefab that hosts many GameObjects that you created for organizational reasons, Unity does not identify the GameObjects under it that are actually the same as instances of the same object. This means that when you add such a prefab to the Prefab Manager, GPUI has no way of knowing that some of the GameObjects under this prefab could be instanced in the same draw call. The result is a draw call for each mesh/material combination under this prefab, which defeats the purpose of GPU Instancing.

To illustrate this, think of a prefab that represents a building. Perhaps the building prefab has windows as children that use the same mesh and materials. Perhaps it also has smaller building blocks, doors, tables, etc. that share meshes and materials. With such a large prefab, however, from the perspective of Unity (and therefore GPUI), you effectively have a prefab with as many mesh/material combinations as the total number of meshes and materials it hosts. So if you add this prefab to GPUI as a prototype, you will also see that many draw calls.

Instead, the better way of having this building in your scene (at least as far as GPUI is concerned) is to have a host building GameObject - where all its child windows, doors, tables, etc. are instances of their own prefabs. When you add these prefabs as prototypes to GPUI, you will see that all the windows, doors, etc. will share a single draw call instead.

Note, however, that this issue no longer applies with the Nested Prefabs support in Unity 2018.3 and later. With Nested Prefabs, you can make all your windows, doors, etc. prefabs and still save the building as a container prefab. GPUI supports Nested Prefabs, so if you create a prototype from the building prefab, the Prefab Manager will recognize the children as prototypes as well.

Nevertheless, this is one of the most important issues concerning prototypes and instance counts; and it is usually one of the biggest pitfalls while using GPU Instancer.

Nested Prefabs

Nested Prefabs (introduced in Unity 2018.3) are a great way to organize your scenes. With GPUI, Nested Prefabs support can also be used to define prototypes that are shared between different prefabs. This is an ideal strategy for scenes that contain modular prefabs: if many different modules make up your main game objects, defining these modules as GPUI prototypes lets GPUI render each module with a single draw call. With Nested Prefabs, you can then keep your main (container) game objects as prefabs that contain these prefab modules and use them to design your scenes.

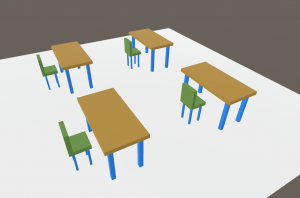

Let's consider an example: We have a table, and a chair. Both the table and chair have legs, and we use the same mesh/material combination for these legs:

Without Nested Prefabs

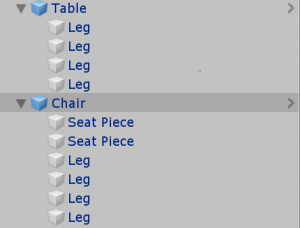

Let's first consider what it would be like without using Nested Prefabs. We would have only two prefabs for the Table and the Chair (the legs won't be nested as prefabs under these, so we don't have a leg prefab):

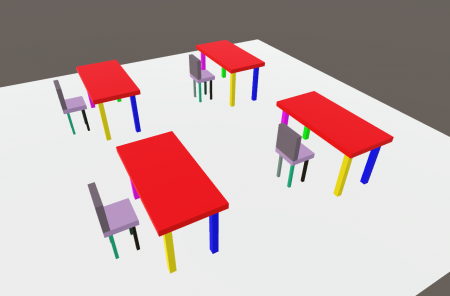

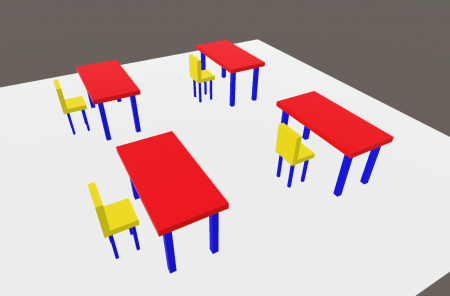

Notice in this screenshot that the Table and Chair show as blue (they are prefabs) and the legs are grey (they are not prefabs). In this scenario, we would define the Table prefab as a GPUI prototype on the manager, and the Chair prefab as another. GPUI would then analyse their mesh renderers and find that the Table contains 5 renderers (1 table top and 4 legs) and the Chair contains 6 renderers (2 seat objects and 4 legs). Since GPUI issues a draw call for each renderer of a prototype, we would have 5 draw calls for all the tables and 6 draw calls for all the chairs in the scene: a total of 11 draw calls.

These would be 11 draw calls whether it is 1 table and 1 chair, or 10k tables and chairs. This is good, but it could be better if we could tell GPUI that all the legs in the scene use the same mesh/material combination so it could render all of them in a single draw call. This is where Nested Prefabs come in handy.

With Nested Prefabs

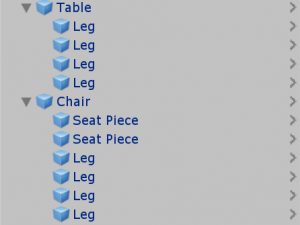

Now let's consider what it would be like with Nested Prefabs. We have the ability to use prefabs inside prefabs. We can create prefabs of our repeating modules (such as the legs and seat pieces) and nest these under the main prefabs (such as the table and chair). Thus we can have one Leg prefab, a Table prefab (that also contains the Leg prefab) and a Chair prefab:

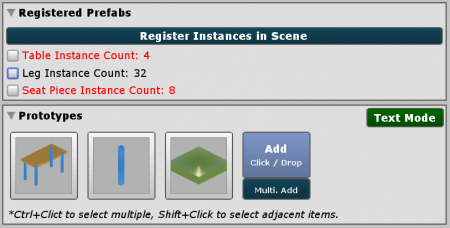

Notice that the Table and Chair show as blue as before (they are prefabs), but this time the legs also show as blue (they are prefabs, too). In this scenario, we can define the Leg prefab as a separate GPUI prototype on the manager. This tells GPUI that it can draw all the legs in a single draw call. We also add the Table prefab as a prototype, and the Seat Piece as a prototype too. Now, when GPUI analyses these prototypes, it will treat all the legs in the scene as instances of the Leg prototype and ignore the legs inside the Table prototype.

Notice we did not define the Chair as a prototype. This is because we defined the Legs as a prototype, the Seat Pieces as another prototype and there are no other renderers left in the Chair prefab for GPUI to consider as yet another prototype. If we tried adding the Chair having already added Leg and Seat Piece as prototypes, GPUI would throw an error saying it could not find any Mesh Renderers inside this prefab (since it will be ignoring all the children defined as other prototypes).

Having defined our prototypes like this, we now have 1 draw call for the Table instances (for the table tops), 1 draw call for all the Legs and 1 draw call for all the Seat Pieces in the scene: a total of 3 draw calls. This is a lot better than the 11 draw calls we had without Nested Prefabs above.
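The difference between the two setups can be reproduced with a small counting model in Python. It is a hypothetical sketch based only on the rules described in this section: GPUI issues one draw call per renderer of a prototype, and renderers that belong to a nested prefab which is itself a prototype are left to that prototype. The `Renderer` tuple and `count_draw_calls` function are illustrative names, not GPUI API.

```python
from collections import namedtuple

# nested_prefab: name of the nested prefab this renderer belongs to (None for a
# plain child GameObject); mesh_material: a label for its mesh/material combination.
Renderer = namedtuple("Renderer", ["nested_prefab", "mesh_material"])

def count_draw_calls(prefabs, prototypes):
    """One draw call per renderer of each prototype, skipping renderers that
    belong to a nested prefab registered as its own prototype."""
    total = 0
    for name in sorted(prototypes):
        own = [r for r in prefabs[name] if r.nested_prefab not in prototypes]
        if not own:
            raise ValueError(f"{name}: no renderers left for this prototype")
        total += len(own)
    return total

# Without Nested Prefabs: the legs and seats are plain children.
flat = {
    "Table": [Renderer(None, "top")] + [Renderer(None, "leg")] * 4,
    "Chair": [Renderer(None, "seat")] * 2 + [Renderer(None, "leg")] * 4,
}
print(count_draw_calls(flat, {"Table", "Chair"}))  # 11

# With Nested Prefabs: Leg and SeatPiece are prefabs nested in Table and Chair.
nested = {
    "Leg": [Renderer(None, "leg")],
    "SeatPiece": [Renderer(None, "seat")],
    "Table": [Renderer(None, "top")] + [Renderer("Leg", "leg")] * 4,
    "Chair": [Renderer("SeatPiece", "seat")] * 2 + [Renderer("Leg", "leg")] * 4,
}
print(count_draw_calls(nested, {"Leg", "SeatPiece", "Table"}))  # 3
```

Adding "Chair" to the prototype set in the nested case raises the ValueError, mirroring the error GPUI reports for a prefab whose renderers are all covered by other prototypes.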

In the screenshots below, you can see a comparison between the draw calls when using nested prefabs and when not using them. Each color is a draw call in this picture.

Using Occlusion Culling

The rule of thumb here is to turn occlusion culling off if your scenes do not have a meaningful number of occluders, or if the meshes have very low triangle counts.

The occlusion culling solution that GPUI implements is extremely easy to use: you don't have to do anything to use this feature. You do not need to bake any maps, add extra scripts, or use Layers. Furthermore, since it runs on the GPU, it is also extremely fast. As such, you might be tempted to use this feature even where you probably won't need it.

However, note that the Hi-Z occlusion culling solution introduces additional operations in the compute shaders. Although GPUI is optimized to handle these operations efficiently, they still create unnecessary overhead in scenes where the game world is set up so that nothing is gained from occlusion culling. A good example of this would be strategy games with top-down cameras, where almost everything is always visible and there are no obvious occluders.

GPUI makes it possible to use extreme numbers of objects in your scenes. At such numbers, the cost of testing for occlusion on the GPU can be higher than desired if the scene is not designed so that this cost is offset by the average amount of geometry that gets culled. In these scenarios, you will get better average performance out of GPUI with occlusion culling turned off.

Also, there may be cases where the instanced geometry is so low in triangle count that simply rendering it is cheaper than testing it for occlusion. Typical examples are low-poly style games or mobile games where instance counts are not extreme. If the graphics card can render the excess geometry faster than it can determine whether to cull it, GPU-based occlusion culling is doing more harm than good. The best way to check this is to run your scene with occlusion culling on and off and compare the results.
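Comparing the two runs can be as simple as comparing average frame times. The Python helper below is a minimal sketch, assuming you have captured frame times in milliseconds (for example from the Unity Profiler) for the same scene and camera path, once with occlusion culling on and once with it off. The function name and the `min_gain_ms` threshold are hypothetical, and the sample numbers are placeholders, not measurements.

```python
from statistics import mean

def occlusion_culling_pays_off(frame_ms_on, frame_ms_off, min_gain_ms=0.0):
    """Return True if the run with occlusion culling on has a lower average
    frame time than the run with it off, by more than min_gain_ms."""
    if not frame_ms_on or not frame_ms_off:
        raise ValueError("need frame time samples from both runs")
    return mean(frame_ms_off) - mean(frame_ms_on) > min_gain_ms

# Placeholder samples (ms per frame); replace them with your own captures.
print(occlusion_culling_pays_off([16.0, 17.0, 16.5], [18.0, 18.5, 17.5]))  # True
print(occlusion_culling_pays_off([12.4, 12.6], [12.0, 12.2]))              # False
```

A small positive `min_gain_ms` helps avoid keeping occlusion culling on for gains that are within measurement noise.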

In short, it is recommended to design your scenes so that there is obvious occlusion for GPUI's occlusion culling to take advantage of, such as terrain elevations that partly block your view, or walls and buildings that the player walks in front of. If your game will never have enough occluders (e.g. a top-down strategy game), or if your prototypes' mesh geometry is so light that culling is not worth the testing, then it is recommended to turn occlusion culling off.

Using GPUI for Unity Terrain Details

The rule of thumb here is to balance the visual quality you add to the terrain details against the performance you get out of the GPUI Detail Manager. The Detail Manager will not always be faster than the default Unity terrain.

Quality Settings

When using the Detail Manager, keep in mind that most of the options in its interface serve to increase visual quality beyond that of the default Unity detail shader. You don't have to do any extra work for this visual upgrade: GPUI takes your Unity terrain and makes it look better just by adding the Detail Manager. The default settings on the manager add shadows to your details and turn the texture prototypes into cross-quads. The foliage shader adds further quality upgrades such as ambient occlusion, color gradients, wind wave tints, and much more.

GPUI uses instancing techniques to back this up performance-wise, and the result is always a stable FPS with no spikes. However, in some cases the resulting FPS can be lower than with the default Unity terrain, especially if you use features that don't exist in Unity's terrain details. So the expected result when increasing visual quality in the Detail Manager is not that it will always be faster than the Unity terrain, but that it stays fast while using these features.

The most expensive of the visual upgrades that the Detail Manager introduces are shadows, and their cost grows with the number of Shadow Cascades defined in your quality settings. If you have multiple detail prototypes on the manager, one way to improve performance while using shadows is to enable them selectively on only some prototypes. Also, if you are using a third-party Screen Space Ambient Occlusion effect, it usually looks similar to having shadows on your grass, so you can turn shadows off for all grass prototypes to get a similar-looking terrain with better performance.
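A rough way to reason about the shadow cost is to count how many extra draws shadow casting adds. The Python sketch below assumes, as a simplification, that every shadow-casting detail prototype is drawn once more per shadow cascade; the prototype names and draw call figures are hypothetical, and the real cost also depends on geometry and culling.

```python
def shadow_draw_calls(prototypes, shadow_cascades):
    """prototypes: iterable of (name, draw_calls, casts_shadows) tuples.
    Simplified model: each shadow-casting prototype is drawn once per cascade."""
    return sum(draw_calls * shadow_cascades
               for _name, draw_calls, casts_shadows in prototypes
               if casts_shadows)

all_shadows = [("Grass", 1, True), ("TallGrass", 1, True), ("Flowers", 1, True)]
selective = [("Grass", 1, False), ("TallGrass", 1, False), ("Flowers", 1, True)]

print(shadow_draw_calls(all_shadows, shadow_cascades=4))  # 12
print(shadow_draw_calls(selective, shadow_cascades=4))    # 4
```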

Also, depending on your settings, the cross-quadding option doubles, triples or quadruples the amount of geometry for texture details compared with the Unity details. This doesn't always produce the expected visual improvement, however. If your grass is densely distributed on the terrain, you may not see much difference in quality between using two quads and four.
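The geometry multiplier is simple arithmetic. The Python sketch below assumes, as the comparison above implies, that a default Unity texture detail is a single quad (2 triangles) and that cross-quadding replaces it with 2, 3 or 4 quads; the function name is hypothetical.

```python
TRIANGLES_PER_QUAD = 2

def texture_detail_triangles(detail_instances, quads_per_detail=1):
    """Triangles submitted for texture details, before any culling."""
    return detail_instances * quads_per_detail * TRIANGLES_PER_QUAD

for quads in (1, 2, 3, 4):
    print(quads, texture_detail_triangles(1_000_000, quads))
# 1 2000000
# 2 4000000
# 3 6000000
# 4 8000000
```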

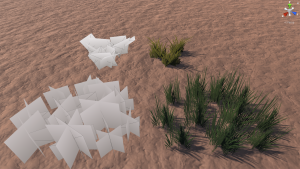

Prefab Details Instead of Textures

It is also worth noting here that the Detail Manager supports using prefabs as detail prototypes as well. If you use tufts of grass as prefabs (as shown in the picture on the left), you will save a lot on instance counts. Although GPUI works better with higher instance counts, grass details on a terrain can reach extreme instance counts, such as 1 or 2 million. At such numbers, the visibility operations in the compute shaders can start to tax the GPU. By using such a tuft prefab, you can cut the instance counts down considerably. For example, if you have a tuft consisting of 10 separate cross-quads, you can achieve the same effect with 100,000 (1,000,000 / 10) instances instead of the 1,000,000 instances needed with automated cross-quads from default texture terrain details. The only difference is the way you distribute your grass, yet performance will be better. GPUI does not do this automatically (i.e. convert your texture details into combined cross-quads, or tufts) only because it aims to stay faithful to how your terrain already looks.
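The instance count saving from tufts can be checked with the same numbers. This Python sketch assumes that the visibility work in the compute shaders scales with the number of instances (one visibility test per instance), while the total number of cross-quads drawn stays the same; the function name is hypothetical.

```python
def instances_needed(total_cross_quads, cross_quads_per_instance):
    """Instances required to place total_cross_quads, rounding up."""
    return -(-total_cross_quads // cross_quads_per_instance)  # integer ceiling division

texture_details = instances_needed(1_000_000, 1)   # one cross-quad per texture detail
tuft_prefabs = instances_needed(1_000_000, 10)     # a tuft of 10 cross-quads per prefab

print(texture_details, tuft_prefabs)    # 1000000 100000
print(texture_details // tuft_prefabs)  # 10 (times fewer instances to test)
```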

Keep in mind that you can use the GPUI foliage shader on your prefabs as well.

Occlusion Culling with Terrain Elevations

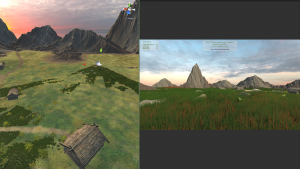

GPUI also offers culling solutions that help increase the FPS, but they require you to design your scenes with them in mind. For example, the occlusion culling feature will give better results on terrains with hills that block your view. These need not be giant hills; slight elevations that hide some instances, as in the included DetailInstancingDemo scene, are enough.

In scenes with wide plains where nothing is culled, the occlusion culling system provides no benefit. If you want scenes like this, it is recommended to cut back mainly on shadows, the cross-quadding options and the maximum detail distance for better performance.

However, as you can see in the image to the left, slight terrain elevations help immensely with detail culling in combination with the occlusion culling feature.

Also, keep in mind that GPUI lightens the CPU load by moving all rendering-related work to the GPU. If you test only the terrain with a GPUI Detail Manager, you are effectively testing only the GPU, since no other scripts are running. In a real game, your CPU will be busy with other work, and the benefit of GPUI's instancing solution would be more apparent in the FPS.

In short, using the Detail Manager effectively requires some thought in the design process. You need to tune the quality settings on the manager according to the terrain you have. Since the work is all done on the GPU, the final FPS scales directly with a better graphics card; but since you are adding features that don't exist in the Unity terrain, it will not always be faster than the default terrain. The recommended approach is therefore to create different quality levels in your game and use different settings for each. For an example of this, take a look at the included DetailInstancingDemo scene.

Using a Multiple Camera Setup

The best practice for a multiple camera setup is to use multiple managers that define the same prototypes and to enable the Use Selected Camera Only option on all of them.

If your scene features a split screen setup, or if you render part of your scene to another camera to overlay it on the screen, you would need to have a multiple camera setup. It is possible to have such a setup when using GPU Instancer; however there are a few points that need to be taken into consideration.

First of all, a GPUI manager works with information from the camera that is defined in it. Culling features such as frustum culling, occlusion culling and distance culling (and LOD determination) are all based on information from this camera. Therefore, if you have two cameras in your scene and you assign one of them to a GPUI manager (or it auto-detects one of them), it will apply these features based on that camera only. As a result, the second camera may not show all the instances visible to it, since those instances are culled using the first camera's information.

To prevent this, your first reflex might be to turn off these culling features. While this would work, it is not an efficient solution when there are many instances to be culled in the scene, since GPUI's culling features are a large part of its rendering performance. Without frustum or occlusion culling, the GPU would be rendering instances that are not visible to any camera.

Instead, the best practice in most cases with a multiple camera setup is to use one manager per camera, with the managers defining the same prototypes. With two cameras, this doubles the draw calls for GPUI prototypes, but it generally performs far better than rendering unnecessary geometry with the culling features disabled. To do this, you can follow these steps:

- (1) Set up a manager with the desired prototypes in it for the first camera. This can be any GPUI manager you wish to use.

- (2) Disable the Auto Select Camera option on the manager and assign the first camera to the Use Camera field.

- (3) Enable the Use Selected Camera Only option on the manager.

- (4) Duplicate that manager in the Hierarchy window (you can simply select the manager and press CTRL + D).

- (5) Finally, assign the second camera to the Use Camera field of the second manager.

You can follow these steps and duplicate the manager for each additional camera you wish to use.
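Why one culling camera is not enough can be shown with a simplified model. In the Python sketch below, a 2D field-of-view check stands in for frustum culling, and each manager culls with its own camera, as with Use Selected Camera Only. The `Camera` class, positions and angles are hypothetical; GPUI's actual culling runs in compute shaders against the full 3D frustum.

```python
import math
from dataclasses import dataclass

@dataclass
class Camera:
    name: str
    x: float
    y: float
    heading_deg: float     # direction the camera faces, in degrees
    fov_deg: float = 90.0  # horizontal field of view

    def sees(self, px, py):
        """2D stand-in for a frustum test: is the point within the field of view?"""
        angle = math.degrees(math.atan2(py - self.y, px - self.x))
        offset = (angle - self.heading_deg + 180.0) % 360.0 - 180.0
        return abs(offset) <= self.fov_deg / 2

def cull(camera, instances):
    """Indices of instances that survive culling with this camera's information."""
    return {i for i, (px, py) in enumerate(instances) if camera.sees(px, py)}

instances = [(10.0, 0.0), (-10.0, 0.0), (0.0, 10.0)]
main = Camera("Main", 0.0, 0.0, heading_deg=0.0)
overlay = Camera("Overlay", 0.0, 0.0, heading_deg=180.0)

# A single manager culling with the main camera only.
rendered = cull(main, instances)
print(sorted(cull(overlay, instances) - rendered))  # [1]: missing for the overlay camera

# One manager per camera: each culls for, and renders to, its own camera.
per_camera = {cam.name: cull(cam, instances) for cam in (main, overlay)}
print(per_camera)  # {'Main': {0}, 'Overlay': {1}}

prototype_draw_calls = 3  # hypothetical draw calls for one manager's prototypes
print(prototype_draw_calls * len(per_camera))  # 6: draw calls double with two managers
```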

Keep in mind, however, that the Use Selected Camera Only option makes each manager render only to its assigned camera. Since the Unity Editor's scene camera is also a camera, your instances will not show in the Scene View during Play Mode when you use this option.

Please also note that the Auto Add/Remove functionality and the Add/remove API methods will not work in this setup.

Using a No-GameObject Workflow where Possible

This is an advanced topic, and currently a no-GameObject workflow in GPUI is only available through the GPU Instancer API.

The idea behind a no-GameObject workflow is that even the bare-bones existence of a typical GameObject in your scene affects performance. GameObjects are usually necessary for various reasons: you may need colliders or scripts on your objects, or you may simply be instantiating a prefab in your scene. However, as well optimized as GameObjects are in Unity, not having them at all while still rendering their meshes/materials gives you the best performance if all you need is for them to be seen by the camera.

GPUI makes this possible by giving access to its core rendering system through its API. There are two main API methods that can be used for a no-GameObject workflow.

The first one is InitializeWithMatrix4x4Array, which mainly allocates the required memory on the GPU and sets the data from the given Matrix4x4 array. This is enough to start the rendering process for the given objects.

The second one is UpdateVisibilityBufferWithMatrix4x4Array, which updates the GPU memory with the given Matrix4x4 array. It is used to update the matrix data of the objects when you want to move, rotate or scale them. It can also be used for add or remove operations, as long as enough memory was allocated during initialization.

The initialization method is slower because it sets up GPU memory for indirect instancing. The update method is much faster because it only updates a portion of GPU memory.

For example, if you want to add or remove objects, the best approach is to start with an array large enough to hold your maximum number of instances, and to reinitialize only when required (e.g. when the allocated memory is no longer enough, or when it is too large and you want to free up some GPU memory). The unused indices in the array can be set to Matrix4x4.zero. GPUI's compute shaders discard these automatically, and they are not processed for rendering.
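The pre-allocation pattern can be sketched independently of Unity. The Python model below is hypothetical: plain 16-float tuples stand in for Matrix4x4, and two counters mark where a real implementation would call the initialization method (slow path) or the update method (fast path) described above. Growing by doubling is an assumed policy, not something GPUI prescribes.

```python
ZERO = (0.0,) * 16  # stand-in for Matrix4x4.zero; GPUI discards such slots

def translation(x, y=0.0, z=0.0):
    """Row-major 4x4 translation matrix flattened to 16 floats (illustrative)."""
    return (1.0, 0.0, 0.0, x,
            0.0, 1.0, 0.0, y,
            0.0, 0.0, 1.0, z,
            0.0, 0.0, 0.0, 1.0)

class InstanceBuffer:
    """Hypothetical model of a pre-allocated instance array for a no-GameObject workflow."""

    def __init__(self, capacity):
        self.matrices = []
        self.count = 0            # number of active slots at the front of the array
        self.initializations = 0  # slow path: (re)allocate GPU memory
        self.updates = 0          # fast path: update existing GPU memory
        self._initialize(capacity)

    def _initialize(self, capacity):
        # Keep the active matrices and pad the rest of the array with ZERO.
        active = self.matrices[:self.count]
        self.matrices = active + [ZERO] * (capacity - len(active))
        self.initializations += 1

    def _update(self):
        self.updates += 1

    def add(self, matrix):
        if self.count == len(self.matrices):
            # Out of allocated slots: reinitialize with a bigger array (assumed doubling).
            self._initialize(max(1, 2 * len(self.matrices)))
        self.matrices[self.count] = matrix
        self.count += 1
        self._update()

    def remove(self, index):
        if not 0 <= index < self.count:
            raise IndexError(index)
        last = self.count - 1
        # Move the last active matrix into the freed slot and zero out the old last slot.
        self.matrices[index] = self.matrices[last]
        self.matrices[last] = ZERO
        self.count -= 1
        self._update()

buffer = InstanceBuffer(capacity=4)
for i in range(4):
    buffer.add(translation(float(i)))
print(buffer.initializations, buffer.updates)  # 1 4

buffer.add(translation(4.0))  # exceeds capacity: one reinitialization
print(buffer.initializations, len(buffer.matrices))  # 2 8

buffer.remove(0)  # fast path only
print(buffer.count, buffer.matrices[4] == ZERO)  # 4 True
```

Adds and removes stay on the fast path until the array is full; only growing (or deliberately shrinking to free memory) pays for a reinitialization.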


Using the Tree Manager with Multiple Terrains

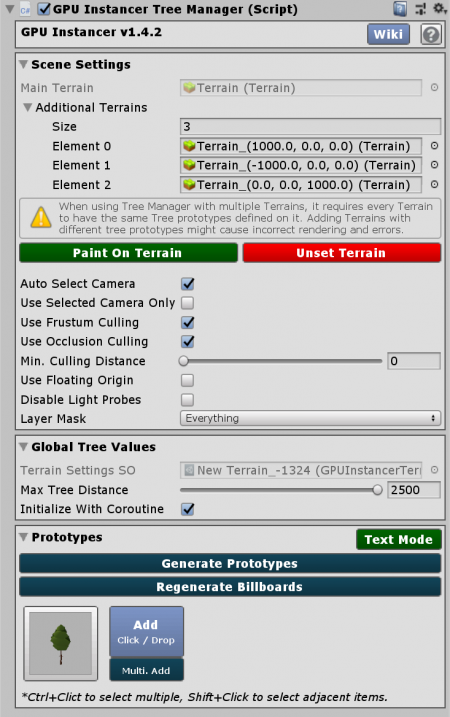

Since GPUI version 1.4.2, when you add a Tree Manager to a scene with multiple Unity Terrains, GPUI automatically takes the tree prototypes of these terrains into account and combines the terrains that use the same tree prototypes. This is designed to lower the number of draw calls and the GPU memory usage, resulting in better performance.

When adding terrains at runtime or loading another scene with terrains additively, you can use the GPUInstancerTerrainRuntimeHandler component for the same effect. To do this, add a single Tree Manager to the (initial) scene, and add the GPUInstancerTerrainRuntimeHandler component to each of the terrains that will be added to the scene. The Tree Manager will then check the tree prototypes on terrain GameObjects that have this component and automatically merge the terrains if they have exactly the same tree prototypes. (Note that if terrains with this component have differing tree prototypes, this will result in an error.)
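The merging rule can be pictured as grouping terrains by their tree prototype lists. The Python sketch below is a hypothetical model, not the Tree Manager's code: it treats prototype lists as equal only when they match exactly, in order (an assumption), groups scene terrains accordingly, and raises an error for a runtime terrain whose prototypes differ from the manager's. Terrain and tree names are made up.

```python
def group_by_tree_prototypes(terrains):
    """terrains: dict of terrain name -> list of tree prototype names.
    Returns a dict mapping each distinct prototype list to the terrains using it."""
    groups = {}
    for terrain, prototypes in terrains.items():
        groups.setdefault(tuple(prototypes), []).append(terrain)
    return groups

def check_runtime_terrain(manager_prototypes, terrain, prototypes):
    """Runtime-added terrains must have exactly the manager's tree prototypes."""
    if tuple(prototypes) != tuple(manager_prototypes):
        raise ValueError(f"{terrain} has different tree prototypes: {prototypes}")

scene = {
    "TerrainA": ["Oak", "Pine"],
    "TerrainB": ["Oak", "Pine"],
    "TerrainC": ["Palm"],
}
groups = group_by_tree_prototypes(scene)
print(groups)
# {('Oak', 'Pine'): ['TerrainA', 'TerrainB'], ('Palm',): ['TerrainC']}

check_runtime_terrain(["Oak", "Pine"], "TerrainD", ["Oak", "Pine"])  # merges fine
try:
    check_runtime_terrain(["Oak", "Pine"], "TerrainE", ["Palm"])
except ValueError as error:
    print(error)  # TerrainE has different tree prototypes: ['Palm']
```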

Additionally, if you need finer control over this, you can use the AddTerrainToManager and RemoveTerrainFromManager API methods.