GPU Instancer:Terminology


On this page, you can find information about the concepts and terminology used by GPU Instancer (GPUI). GPUI works out of the box with almost no required setup, but if you are not familiar with the concepts it relies on, reading these sections will help you get the most performance out of GPUI, both by finding the right settings and by creating the right setups for your scenes.


GPU Instancing

GPU instancing is a graphics programming technique that you can use to improve the performance of your games. It works by rendering objects that share the same mesh and material multiple times while sending them to the GPU in as few draw calls as possible. GPU instancing is therefore very helpful when you want to render the same object many times in your scene. Such objects can be anything from buildings to asteroids; they can be grass or trees, or even billboards and impostors. GPU instancing is a relatively new technique that can be used as an alternative to the traditional batching techniques, and it is usually supported by all modern graphics devices that come with a dedicated GPU.

On this point, please note that the power of the GPU is very important in determining how much performance gain you can get from GPU instancing. The gain grows with a more powerful GPU, and on some devices (such as the integrated graphics units offered by e.g. Intel) it might not be very impressive.

Batching

Batching in general is the process of grouping together various mesh/material data to send to the GPU for rendering in every frame. Without any batching, meshes are sent to the GPU one by one and rendered with their corresponding materials/shaders, so each visible mesh in the scene issues its own draw call. This quickly becomes inefficient: the GPU is much faster than the CPU, and issuing a draw call itself takes CPU time. Without any batching, the GPU would be waiting for the CPU most of the time, and that waiting would result in a lower FPS.

Unity, being a good game engine, offers two major methods of draw call batching: Dynamic Batching and Static Batching. Both of these techniques work mainly on the CPU.

In Dynamic Batching, vertices of the meshes that share the same material are grouped together on the CPU and sent to the GPU in a single draw call. The main limitation of Dynamic Batching (as of this writing) is 900 vertex attributes. So if you have a mesh with vertex position, normal and UV information (for textures), Unity can batch together up to 300 vertices in a single draw call. There are further limitations as well: the shader on the materials must use a single pass, the objects must have the same transform scale, they should not receive real-time shadows, etc.
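The arithmetic behind the 300-vertex figure can be sketched as follows. This is only an illustration of the numbers quoted above; the function name and the idea of counting "attributes per vertex" as a plain integer are assumptions made for the example, not a Unity API.

```python
# Illustrative arithmetic only; this is not a Unity API.
DYNAMIC_BATCH_ATTRIBUTE_LIMIT = 900  # limit quoted in the text above


def max_vertices_per_dynamic_batch(attributes_per_vertex: int) -> int:
    """Return how many vertices fit under the vertex attribute limit."""
    if attributes_per_vertex <= 0:
        raise ValueError("attributes_per_vertex must be positive")
    return DYNAMIC_BATCH_ATTRIBUTE_LIMIT // attributes_per_vertex


# Position, normal and one UV set: three attributes per vertex.
print(max_vertices_per_dynamic_batch(3))  # 300
```

A mesh that uses more attributes per vertex leaves room for proportionally fewer vertices in each batch.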

Static Batching imposes fewer limitations, and the number of vertices that can be grouped together is much higher: you are limited to 64k vertices per batch (but fewer on mobile platforms). However, the catch with Static Batching is that no kind of movement is allowed, so objects must be marked static beforehand in order to be statically batched. One further problem is that Static Batching uses a lot of memory when the object counts are high, although it does not use as much CPU power as Dynamic Batching. For example, marking trees as static in a scene with a dense forest can have a serious impact on CPU memory.

Furthermore, since both of these batching techniques are based on the CPU, they share the power of the CPU with other running game scripts.

Instancing

GPU instancing (also known as geometry instancing) is an alternative to batching that lightens the load on the CPU and on CPU memory by taking advantage of the power of modern GPUs. It works by sending the same mesh/material combinations to the GPU in as few draw calls as possible and instructing the GPU to render them multiple times. This removes the bottleneck that occurs when sending mesh and material data from the CPU to the GPU, and results in a higher FPS if there are many of the same mesh/material combinations.

There are various advantages of using GPU instancing over draw call batching. First, almost no operations are done on the CPU, since there is no mesh combining process before sending the instance data to the GPU. This frees up either CPU power or CPU memory, depending on the batching method it is compared to. Second, there are far fewer limitations, since there are no vertex attribute limits and the instance transforms can be modified without breaking batching (instances can move, rotate, scale, etc.).

However, it is also important to note that instancing becomes a better strategy as the instance counts go higher, since the advantages actually start to show when there are many objects and their draw calls are reduced. After all, if there is only one mesh in the scene, its draw call would be 1 whether it is instanced or not. If there are many objects with different meshes and/or materials, their draw calls would be 1 for each, again, whether they are instanced or not. In short, GPU instancing helps in scenarios where you have many instances of the same game object.
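The counting argument above can be sketched in a few lines. This is a deliberately simplified model: it assumes every object is visible, ignores shadows and per-call instance limits, and represents each object as a hypothetical `(mesh, material)` pair of names.

```python
def draw_calls_without_instancing(renderers):
    """One draw call per visible object."""
    return len(renderers)


def draw_calls_with_instancing(renderers):
    """One draw call per unique (mesh, material) combination."""
    return len(set(renderers))


# Hypothetical scene: 1000 identical rocks plus two one-off buildings.
scene = [("rock_mesh", "rock_mat")] * 1000 + [
    ("house_mesh", "brick_mat"),
    ("tower_mesh", "stone_mat"),
]

print(draw_calls_without_instancing(scene))  # 1002
print(draw_calls_with_instancing(scene))     # 3
```

The two one-off buildings cost one draw call each either way; only the repeated rock benefits from instancing.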

Instancing vs Indirect Instancing

GPU instancing has been available in Unity since version 5.4. The simplest way to use it is to enable the GPU instancing option in Unity Standard and Surface shaders. When you use this option, Unity will handle frustum culling and culling by baked occlusion maps automatically. The downside of this is that Unity will group together draw calls for every 500 instances in Direct3D and every 125 instances in OpenGL ES 3, OpenGL Core, and Metal. The reason for this limitation is that Unity aims to support as many devices as possible, and not all devices are equal.

In order to use instancing more efficiently on modern devices, Unity provides two scripting APIs: the DrawMeshInstanced API and the DrawMeshInstancedIndirect API. Both of these APIs allow you to use GPU instancing, and the main difference between them boils down to the number of instances you can render in a single draw call. The major downside of using either of these APIs, however, is that Unity will no longer handle frustum and occlusion culling for the instances.

The DrawMeshInstanced API is the simpler of the two. It takes in the mesh and its transform data (positions, rotations, scales) for the instances and instructs the GPU to render the meshes with the given material and count. This method is limited to 1023 instances per draw call.
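To see what these per-call limits mean in practice, the sketch below computes how many draw calls a given instance count needs under each limit quoted above. The helper name and the dictionary labels are assumptions made for illustration.

```python
def draw_calls_needed(instance_count: int, max_instances_per_call: int) -> int:
    """Ceiling division: draw calls needed to cover every instance."""
    if instance_count < 0 or max_instances_per_call <= 0:
        raise ValueError("counts must be non-negative and the limit positive")
    return (instance_count + max_instances_per_call - 1) // max_instances_per_call


# Per-call limits as quoted in the text above.
LIMITS = {
    "shader instancing option, Direct3D": 500,
    "shader instancing option, OpenGL ES 3 / OpenGL Core / Metal": 125,
    "DrawMeshInstanced": 1023,
}

for name, limit in LIMITS.items():
    print(f"{name}: {draw_calls_needed(10_000, limit)} draw calls for 10,000 instances")
```

For 10,000 instances this gives 20, 80 and 10 draw calls respectively.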

The DrawMeshInstancedIndirect API, on the other hand, takes in only the mesh and material, and requires a GPU buffer to set the number of instances to render. Transform data is also defined using a separate buffer. Although complex, this method potentially reduces the number of draw calls to 1, even if there are hundreds or even hundreds of thousands of instances of the same mesh/material combination. On the other hand, using GPU buffers in this way requires graphics hardware compatible with at least Shader Model 4.5, and if Compute Shaders are also used to manipulate these buffers, Shader Model 5.0 is required.
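Unity's scripting reference describes the argument buffer for DrawMeshInstancedIndirect as five integers: index count per instance, instance count, start index location, base vertex location and start instance location. The sketch below only models that layout on the CPU to show that the instance count is ordinary buffer data; the `IndirectArgs` class is hypothetical, and the index count used in the example is an arbitrary value.

```python
import struct
from dataclasses import dataclass


@dataclass
class IndirectArgs:
    """Hypothetical CPU-side model of the five-integer argument buffer."""
    index_count_per_instance: int
    instance_count: int
    start_index_location: int = 0
    base_vertex_location: int = 0
    start_instance_location: int = 0

    def pack(self) -> bytes:
        # Five unsigned 32-bit integers, little-endian: 20 bytes in total.
        return struct.pack(
            "<5I",
            self.index_count_per_instance,
            self.instance_count,
            self.start_index_location,
            self.base_vertex_location,
            self.start_instance_location,
        )


# Arbitrary example: a mesh with 36 indices drawn 100,000 times.
args = IndirectArgs(index_count_per_instance=36, instance_count=100_000)
raw = args.pack()
print(len(raw))                      # 20
print(struct.unpack("<5I", raw)[1])  # 100000
```

Because the instance count lives in a buffer rather than in a method argument, it can be rewritten on the GPU without a new draw call being issued from the CPU.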

In short, indirect GPU instancing is the most complex yet also the most effective method for GPU instancing, but it also requires modern hardware.

How does GPU Instancer work?

The aim of GPUI is to provide an easy-to-use interface for indirect instancing, without having to go through the learning curve (or the extensive development time) of GPU programming and Compute Shaders. To provide this, GPUI analyzes a prefab (or Unity terrain) and uses indirect instancing to render its instances (or detail/tree prototypes). After sending the mesh and material data to the GPU once, GPUI creates various GPU buffers and dispatches Compute Shaders on every frame to manipulate the instance data in these buffers. This approach takes the load completely off the CPU and uses the GPU for all rendering processes, so that the CPU threads are free to work on game scripts. GPUI also uses different optimization techniques to work effectively on different platforms, so that whether the target platform is a high-end PC, VR, or a modern mobile device, GPUI provides the best indirect instancing strategy.

In short, GPU Instancer builds further on the concept of indirect instancing by applying various culling and LOD operations directly on the GPU and rendering only the relevant instances on the screen. This is also why the frustum and occlusion culling systems included with GPU Instancer are designed to work with GPUI prototypes only. What they do is reduce the number of objects drawn for each prototype that is defined on the GPUI Managers.

To exemplify, let's say you have 10,000 instances of a prefab. Let's at first keep the example simple and assume this is a simple prefab with only one mesh and one material. To render all the instances of this prefab, GPU Instancer makes only 1 draw call with the count 10,000. This count and the transform data for the instances are stored in GPU memory. When you use frustum and/or occlusion culling, this count is modified on the GPU, and based on the result of the culling calculations (which are also done on the GPU) only the visible instances are rendered. So GPUI handles all 10,000 instances of this prefab in 1 draw call, and rendering speeds up further because fewer objects are actually rendered. If you want the instances of this prefab to cast shadows, another draw call is made for their shadows. Further optimization techniques are again applied on the GPU (to prevent shadow popping, etc.), and the resulting render takes 2 draw calls for these 10,000 prefab instances with their shadows.
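The way culling changes the instance count while the draw call count stays at 1 can be pictured with a small CPU-side sketch. This is not GPUI's implementation (which runs in Compute Shaders on the GPU); the function name, the instance positions and the visibility rule are all made up for the example.

```python
def cull_instances(instances, is_visible):
    """Compact the visible instances and return them with their count."""
    visible = [instance for instance in instances if is_visible(instance)]
    return visible, len(visible)


# Hypothetical data: 10,000 instances spread along the x axis.
instances = [(float(x), 0.0, 0.0) for x in range(10_000)]

# Hypothetical visibility rule standing in for frustum/occlusion tests.
visible, count = cull_instances(instances, lambda position: position[0] < 2500.0)

draw_calls = 1  # still a single draw call; only the instance count changed
print(count, draw_calls)  # 2500 1
```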

In a more complicated prefab, the core idea is still the same. GPUI makes 1 draw call for each mesh/material combination found in the prefab. Meshes with multiple materials result in a draw call for each sub-mesh material. Also, each LOD in an LOD Group is treated as another mesh, meaning that each mesh/material combination in each LOD will take 1 draw call. In effect, this results in only 9 draw calls for the 50k asteroids in the PrefabInstancingDemo scene, where there are 3 asteroid prefabs with 3 LODs each. Also note that the number of draw calls in this scene would still be 9 whether the asteroid instance count was 10 or 100,000. That is why the performance gain from GPUI grows with the instance count.
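The counting rule above can be written down directly. The sketch assumes each asteroid LOD uses a single material (which is what the figure of nine draw calls implies) and leaves out shadow passes; the function name and the list representation are hypothetical.

```python
def prototype_draw_calls(materials_per_lod):
    """One draw call per mesh/material combination, summed over all LODs."""
    return sum(materials_per_lod)


# Three asteroid prefabs, each with three LODs and (assumed) one material per LOD.
asteroid_prefabs = [[1, 1, 1], [1, 1, 1], [1, 1, 1]]
total = sum(prototype_draw_calls(prefab) for prefab in asteroid_prefabs)
print(total)  # 9, regardless of how many instances each prefab has

# A hypothetical prefab whose LOD0 mesh has two sub-mesh materials.
print(prototype_draw_calls([2, 1, 1]))  # 4
```

Note that the instance count does not appear anywhere in the calculation.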

On this point, please note that even though GPUI's operations on the GPU are very fast, they will use GPU resources unnecessarily if the instance counts are too low to provide a performance gain from GPU instancing, as mentioned above. This means that you should not define every prefab in your scene as a GPUI prototype, but only use GPUI for the prefabs that have many instances in your scenes. Please take a look at the Best Practices Page for more information about this.

Compute Shaders

The GPU has a lot more processing power than the CPU. Traditionally, the GPU has been used only for rendering purposes, but modern hardware supports programming the GPU for operations other than rendering (or at least other than the normal rendering pipeline). These programs are called Compute Shaders, and they require a minimum of Shader Model 5.0. This requirement is satisfied by the DirectX 11, OpenGL Core 4.3, Metal, Vulkan and OpenGL ES 3.1 graphics APIs.

By using Compute Shaders, it is possible to achieve faster rendering, and thus GPU Instancer makes extensive use of them. GPUI uses Compute Shaders mainly to manipulate instance data on the GPU, and for visibility calculations, buffer management, shadows, LODs, etc.

Frustum Culling

Without any culling, the graphics card would render everything in the scene, whether or not the rendered objects are actually visible. Since the graphics card gets slower the more geometry it renders, various techniques are used to avoid rendering unnecessary geometry. "Culling" is an umbrella term for deciding, based on some rule, not to render certain objects.

Frustum culling is a culling technique that checks objects against the camera's frustum planes and culls the objects that fall outside the volume enclosed by these planes. These objects are culled because they are not visible to the camera.

GPU Instancer does all the camera frustum testing and the required culling operations with compute shaders on the GPU before rendering the instances. Because of this, the culling operations are faster and take no CPU time, leaving more room for game scripts on the CPU.
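The plane test itself is simple, and a CPU-side sketch may help to picture it. This is not GPUI's compute shader code: it tests bounding spheres rather than whatever bounds GPUI uses, and for readability it uses a box-shaped view volume instead of a real perspective frustum. Each plane is a hypothetical tuple `(nx, ny, nz, d)` with a unit normal pointing into the view volume.

```python
def sphere_outside_plane(plane, center, radius):
    """True if the sphere lies entirely on the outer side of the plane."""
    nx, ny, nz, d = plane
    signed_distance = nx * center[0] + ny * center[1] + nz * center[2] + d
    return signed_distance < -radius


def is_culled(planes, center, radius):
    """Cull when the sphere is completely outside at least one plane."""
    return any(sphere_outside_plane(plane, center, radius) for plane in planes)


# Simplified view volume: x and y in [-10, 10], z in [0, 100].
VIEW_VOLUME = [
    (1, 0, 0, 10), (-1, 0, 0, 10),   # left, right
    (0, 1, 0, 10), (0, -1, 0, 10),   # bottom, top
    (0, 0, 1, 0), (0, 0, -1, 100),   # near, far
]

print(is_culled(VIEW_VOLUME, (0, 0, 50), 1.0))     # False: fully inside
print(is_culled(VIEW_VOLUME, (20, 0, 50), 1.0))    # True: beyond the right plane
print(is_culled(VIEW_VOLUME, (10.5, 0, 50), 1.0))  # False: straddles the right plane
```

An object that only partially crosses a plane is kept, since part of it may still be visible.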

Please note that GPUI currently uses only the camera that is defined on a manager to do frustum culling. If the manager is auto-detecting the camera, this will be the first camera in the scene with the MainCamera tag. What this means is that frustum culling will currently work only for this camera. If your scene requires multiple cameras rendering at the same time, you can turn off the frustum culling feature from the manager. If you are using multiple cameras but not rendering with them at the same time, you can simply call the SetCamera(camera) method from the GPUInstancerAPI after you switch your camera, and GPUI will start handling all its operations on this new camera.

Occlusion Culling

In most games, the camera is dynamic: it captures the game world from different angles. So, for example, in one camera position the grass behind a house can be visible, and yet in another one it may not. However, with only frustum culling, the grass will be rendered as long as it stays inside the camera frustum, even though it may actually be hidden behind the house. This happens because frustum culling only tests whether an object lies inside the frustum, not whether other geometry hides it. The GPU still spends time on the hidden grass, and any of its pixels that end up covered by closer geometry are wasted work. This is referred to as overdraw.

Occlusion Culling is a technique that targets this concern. It lightens the load on the GPU by not rendering the objects that are hidden behind other geometry (and therefore are not actually visible).

As opposed to frustum culling, occlusion culling does not happen automatically in Unity. For an overview of Unity's default occlusion culling solution, you can take a look at this link:

https://docs.unity3d.com/Manual/OcclusionCulling.html

This solution has two major drawbacks: (a) that you need to prepare (bake) your scene's occlusion data before using it and (b) that you need your occluding geometry to be static to be able to do so. That effectively means that objects that move during playtime cannot be used for occlusion culling.

GPUI comes with a GPU-based occlusion culling solution that aims to remove these limitations while increasing performance. The algorithm used for this is known as Hierarchical Z-Buffer (Hi-Z) Occlusion Culling. In effect, GPUI allows the instances of its defined prototypes to be culled by any occluding geometry, without baking any occlusion maps or preparing your scene.

Just as with its frustum culling solution, GPUI does the required testing and culling operations with compute shaders on the GPU before rendering the instances. And again, because of this, the culling operations are faster and take no CPU time, leaving more room for game scripts on the CPU.

However, please note that the Hi-Z occlusion culling solution introduces additional operations in the compute shaders. Although GPUI is optimized to handle these operations efficiently, they would still create unnecessary overhead in scenes where the game world is set up such that there is no gain from occlusion culling. A good example of this would be top-down cameras, where almost everything is always visible and there are no obvious occluding objects. For more information on this point, please check the occlusion culling section in the Best Practices Page.

Please also note that, just as with frustum culling, GPUI currently uses only the camera that is defined on a manager to do occlusion culling. If the manager is auto-detecting the camera, this will be the first camera in the scene with the MainCamera tag. What this means is that occlusion culling will currently work only for this camera. If your scene requires multiple cameras rendering at the same time, you can turn off the occlusion culling feature from the manager. If you are using multiple cameras but not rendering with them at the same time, you can simply call the SetCamera(camera) method from the GPUInstancerAPI after you switch your camera, and GPUI will start handling all its operations on this new camera.

Here is a video showcasing GPUI's occlusion culling feature in action:

How does Hi-Z Occlusion Culling work?

In occlusion culling (in general), the most important idea is to never cull visible objects. After this, the second-most important idea is to cull fast. GPUI's occlusion culling algorithm makes the camera generate a depth texture and uses this to make culling decisions in the compute shaders. Culling is decided based on the bounding boxes of the instances: GPUI compares the instance bounds with the depth texture with respect to both their sizes and their depths. If the object instance is completely occluded by a part of the texture that has a closer value, then it is culled.

GPUI implements this technique by adding a Command Buffer to the main camera, and dispatching its depth information to a Compute Shader. The Compute Shader then calculates visibility as mentioned above and relays the visible instances to be rendered. Notice that this method does not involve a readback in the CPU, so that all the culling operations can be executed in the GPU before rendering.

There are various advantages to this, the main ones being that it works out of the box without the need to bake occlusion maps, and that you can use culling with dynamic geometry that you don't even have to define as occluders. Furthermore, it is extremely fast, given that all the operations are executed on the GPU. However, the culling accuracy is ultimately limited by the precision of the depth buffer. On this point, GPUI analyzes the depth texture (by its mip levels) and decides how accurate it can be without compromising performance or culling instances that are actually visible.
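The general idea of a Hi-Z test can be sketched on the CPU as follows. This is a generic textbook-style version written under stated assumptions, not GPUI's shader code: depth values are taken to be plain distances from the camera (larger means farther), the depth buffer is a small square grid with a power-of-two size, and each mip level keeps the farthest depth of every 2x2 block so that the test stays conservative. All names are hypothetical.

```python
def build_hiz_pyramid(depth):
    """Build mip levels; each texel keeps the FARTHEST depth of its 2x2 block."""
    levels = [depth]
    while len(levels[-1]) > 1:
        prev = levels[-1]
        half = len(prev) // 2
        levels.append([
            [max(prev[2 * y][2 * x], prev[2 * y][2 * x + 1],
                 prev[2 * y + 1][2 * x], prev[2 * y + 1][2 * x + 1])
             for x in range(half)]
            for y in range(half)
        ])
    return levels


def is_occluded(levels, x_min, y_min, x_max, y_max, nearest_depth):
    """Test a screen-space rectangle (inclusive, level-0 pixels) against the pyramid."""
    size = max(x_max - x_min + 1, y_max - y_min + 1)
    # Pick the mip level where the rectangle spans at most 2 texels per axis.
    level = min((size - 1).bit_length(), len(levels) - 1)
    texels = levels[level]
    farthest_occluder = max(
        texels[y][x]
        for y in range(y_min >> level, (y_max >> level) + 1)
        for x in range(x_min >> level, (x_max >> level) + 1)
    )
    # Occluded only if the instance's nearest point is behind everything it overlaps.
    return nearest_depth > farthest_occluder


# 4x4 depth buffer: a wall at distance 5 on the left half, open sky (100) on the right.
depth = [[5.0, 5.0, 100.0, 100.0] for _ in range(4)]
pyramid = build_hiz_pyramid(depth)

print(is_occluded(pyramid, 0, 0, 1, 1, nearest_depth=20.0))  # True: behind the wall
print(is_occluded(pyramid, 1, 0, 2, 1, nearest_depth=20.0))  # False: sticks out past the wall
print(is_occluded(pyramid, 0, 0, 1, 1, nearest_depth=3.0))   # False: in front of the wall
```

Coarser mip levels make the test cheaper but also more conservative, which is the accuracy versus performance trade-off mentioned above.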

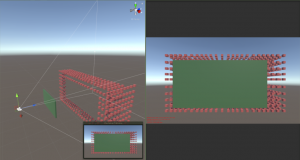

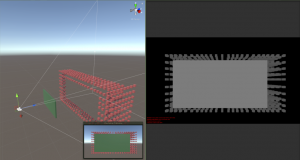

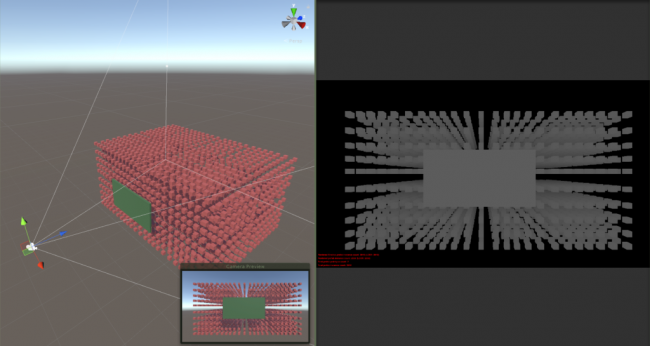

As you can see in the images above, the depth texture is a grayscale representation of the camera view where white is close to the camera and black is far away.

As the distance between the occluder and the instances becomes shorter relative to their distance from the camera, their depth representations come closer to the same color.

In short, given the precision of the depth buffer, GPUI makes the best choice to cull instances for better performance, without risking culling any visible objects.

For further information on GPUI's Occlusion Culling system, you can take a look at this video:

Billboards

A billboard is a simple quad that always faces the camera. An atlas/impostor billboard (such as the ones generated with GPUI) also shows snapshots of an object from different angles depending on the camera viewing angle. GPUI's billboard generator is mainly designed for use with trees, so it generates snapshots of view angles around the world Y axis. This means that the billboards do not have views from above or below. For detailed information on GPUI's billboard generator, you can take a look at its section in the Features Page. Billboards are a great way to increase performance while keeping vast viewing distances.
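Choosing which snapshot to show comes down to the horizontal angle between the billboard and the camera. The sketch below illustrates that idea only; it is not GPUI's shader code, and the function name, the frame count of 8 and the convention that frame 0 faces the +Z direction are all assumptions.

```python
import math


def billboard_frame_index(camera_pos, billboard_pos, frame_count):
    """Pick the atlas frame from the camera's angle around the world Y axis."""
    dx = camera_pos[0] - billboard_pos[0]
    dz = camera_pos[2] - billboard_pos[2]
    # Only x and z are used: the camera's height does not affect the result.
    angle = math.degrees(math.atan2(dx, dz)) % 360.0
    step = 360.0 / frame_count
    # Round to the nearest snapshot and wrap around at 360 degrees.
    return int((angle + step / 2) // step) % frame_count


origin = (0.0, 0.0, 0.0)
print(billboard_frame_index((0.0, 0.0, 10.0), origin, 8))    # 0
print(billboard_frame_index((10.0, 5.0, 0.0), origin, 8))    # 2
print(billboard_frame_index((0.0, 100.0, 10.0), origin, 8))  # 0, height is ignored
```

Because height is ignored, a camera looking down from directly above would still see a side-view snapshot, which matches the limitation described above.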