GPU Instancer:Terminology

This page explains the concepts and terminology used by GPU Instancer (GPUI). GPUI works out of the box with almost no required setup, but if you are not familiar with the concepts it relies on, reading these sections will help you find the right settings for your scenes and get the most performance out of GPUI.

GPU Instancing

Compute Shaders

Frustum Culling

Without any culling, the graphics card would render everything in the scene, whether or not the rendered objects are actually visible. The more geometry the graphics card renders, the slower rendering becomes, so various techniques are used to avoid rendering unnecessary geometry. "Culling" is an umbrella term for deciding, based on some rule, that certain objects will not be rendered.

Frustum culling is a culling technique that tests objects against the camera's frustum planes and culls the objects that fall outside the volume bounded by these planes. These objects are culled because they are not visible to the camera.
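The plane test described above can be sketched in a few lines of Python. This is only an illustration of the general technique, not GPUI's compute shader code: the `Plane` class, the `sphere_in_frustum` function, and the box-shaped view volume built by `box_frustum` are all hypothetical names chosen for this example, and each object is approximated by a bounding sphere.

```python
from dataclasses import dataclass


@dataclass
class Plane:
    # Plane in the form n.p + d = 0. The unit-length normal (nx, ny, nz)
    # points toward the inside of the view volume.
    nx: float
    ny: float
    nz: float
    d: float

    def signed_distance(self, x, y, z):
        # Positive when the point is on the inner side of the plane.
        return self.nx * x + self.ny * y + self.nz * z + self.d


def sphere_in_frustum(planes, center, radius):
    """Return False only if the sphere lies entirely outside some plane."""
    cx, cy, cz = center
    for plane in planes:
        if plane.signed_distance(cx, cy, cz) < -radius:
            return False  # completely behind this plane: cull
    return True  # inside or intersecting: keep


def box_frustum(half_size, near, far):
    """Hypothetical box-shaped view volume looking down +z (assumption)."""
    return [
        Plane(1, 0, 0, half_size),    # left:   x >= -half_size
        Plane(-1, 0, 0, half_size),   # right:  x <=  half_size
        Plane(0, 1, 0, half_size),    # bottom: y >= -half_size
        Plane(0, -1, 0, half_size),   # top:    y <=  half_size
        Plane(0, 0, 1, -near),        # near:   z >=  near
        Plane(0, 0, -1, far),         # far:    z <=  far
    ]


planes = box_frustum(half_size=10.0, near=1.0, far=100.0)
inside = sphere_in_frustum(planes, (0.0, 0.0, 50.0), 1.0)
outside = sphere_in_frustum(planes, (25.0, 0.0, 50.0), 1.0)
straddling = sphere_in_frustum(planes, (10.5, 0.0, 50.0), 1.0)
```

A real perspective frustum has slanted side planes, but the test is the same: an object is culled as soon as it is fully behind any one of the six planes, and objects that straddle a plane are kept.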

GPU Instancer does all the camera frustum testing and the required culling operations with compute shaders on the GPU before rendering the instances. Because of this, the culling operations are faster and take no CPU time, which leaves more room for game scripts on the CPU.

Please note that GPUI currently uses only the camera that is defined on a manager to do frustum culling. If the manager is auto-detecting the camera, this will be the first camera in the scene with the MainCamera tag. This means that frustum culling currently works only for this camera. If your scene requires multiple cameras rendering at the same time, you can turn off the frustum culling feature from the manager. If you are using multiple cameras but not rendering with them at the same time, you can simply call the SetCamera(camera) method from the GPUInstancerAPI after you switch your camera, and GPUI will start handling all its operations on the new camera.

Occlusion Culling

In most games, the camera is dynamic: it captures the game world from different angles. So, for example, in one camera position the grass behind a house can be visible, and yet in another one it may not be. However, with only frustum culling, the grass will be rendered as long as it stays inside the camera frustum, even though it may actually be hidden behind the house. This happens because frustum culling only tests objects against the camera's view volume, so the hidden grass is still submitted to the GPU and its geometry is still processed, even though closer objects cover it in the final image. When the same pixels are drawn more than once in this way, it is referred to as overdraw.

Occlusion Culling is a technique that targets this concern. It lightens the load on the GPU by not rendering the objects that are hidden behind other geometry (and therefore are not actually visible).

Unlike frustum culling, occlusion culling does not happen automatically in Unity. For an overview of Unity's default occlusion culling solution, you can take a look at this link:

https://docs.unity3d.com/Manual/OcclusionCulling.html

This solution has two major drawbacks: (a) you need to prepare (bake) your scene's occlusion data before using it, and (b) your occluding geometry needs to be static for you to be able to do so. That effectively means that objects that move at runtime cannot act as occluders.

GPUI comes with a GPU-based occlusion culling solution that aims to remove these limitations while increasing performance. The algorithm used for this is known as Hierarchical Z-Buffer (Hi-Z) Occlusion Culling. In effect, GPUI allows the instances of its defined prototypes to be culled by any occluding geometry, without baking any occlusion maps or preparing your scene.

Just like its frustum culling solution, GPUI does the required testing and culling operations with compute shaders on the GPU before rendering the instances. Again, because of this, the culling operations are faster and take no CPU time, which leaves more room for game scripts on the CPU.

However, please also note that the Hi-Z occlusion culling solution introduces additional operations in the compute shaders. Although GPUI is optimized to handle these operations efficiently, they still create unnecessary overhead in scenes where the game world is set up such that there is no gain from occlusion culling. A good example of this would be top-down cameras, where almost everything is always visible and there are no obvious occluding objects. For more information on this point, please check the occlusion culling section in the Best Practices page.

How does Hi-Z Occlusion Culling work?

In occlusion culling (in general), the most important idea is to never cull visible objects. After this, the second-most important idea is to cull fast. GPUI's culling algorithm makes the camera generate a depth texture and uses it to make culling decisions in the compute shaders. This has various advantages (e.g. you don't need to bake occlusion maps, and you can use culling with dynamic occluders), and it is extremely fast since all the operations are executed on the GPU. However, the culling accuracy is ultimately limited by the precision of the depth buffer. For this reason, GPUI analyzes the depth texture and decides how accurate it can be without compromising performance and without culling instances that are actually visible.
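To make the idea more concrete, here is a minimal Python sketch of a generic Hi-Z test. It is not GPUI's actual implementation, which runs in compute shaders. The function names `build_hiz` and `is_occluded` are hypothetical, the depth texture is assumed to be square with a power-of-two size, and depth is assumed to grow with distance (0.0 at the near plane, 1.0 at the far plane), which may differ from the convention of your graphics API.

```python
def build_hiz(depth):
    """Build a mip chain from a square, power-of-two depth texture.

    Each coarser texel keeps the FARTHEST (max) depth of its 2x2 block,
    so a coarse texel never claims more occlusion than the pixels under it.
    """
    levels = [depth]
    while len(levels[-1]) > 1:
        prev = levels[-1]
        size = len(prev) // 2
        levels.append([
            [max(prev[2 * y][2 * x], prev[2 * y][2 * x + 1],
                 prev[2 * y + 1][2 * x], prev[2 * y + 1][2 * x + 1])
             for x in range(size)]
            for y in range(size)
        ])
    return levels


def is_occluded(levels, rect, nearest_depth):
    """Conservative test for one instance.

    rect is the instance's screen-space bounds (x0, y0, x1, y1), inclusive,
    in level-0 pixels. nearest_depth is the depth of its closest point.
    """
    x0, y0, x1, y1 = rect
    # Pick a level coarse enough that the rect covers at most 2x2 texels.
    level = 0
    while level < len(levels) - 1 and (
        (x1 >> level) - (x0 >> level) > 1 or (y1 >> level) - (y0 >> level) > 1
    ):
        level += 1
    mip = levels[level]
    farthest_occluder = max(
        mip[y][x]
        for y in range(y0 >> level, (y1 >> level) + 1)
        for x in range(x0 >> level, (x1 >> level) + 1)
    )
    # Cull only if the instance is behind everything drawn in that area.
    return nearest_depth > farthest_occluder


# Hypothetical 4x4 depth texture: a wall (0.3) on the left, empty sky (1.0) on the right.
depth = [[0.3, 0.3, 1.0, 1.0] for _ in range(4)]
levels = build_hiz(depth)

behind_wall = is_occluded(levels, (0, 0, 1, 1), 0.6)
partly_exposed = is_occluded(levels, (1, 0, 2, 1), 0.6)
in_front_of_wall = is_occluded(levels, (0, 0, 1, 1), 0.2)
```

Because every coarse texel stores the farthest depth underneath it, the test can only err toward keeping an instance, which matches the rule of never culling visible objects. The price is that some hidden instances are still rendered when the coarse level is too pessimistic.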

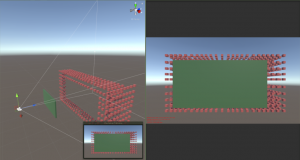

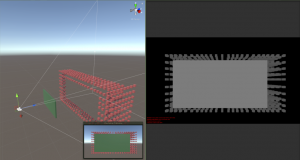

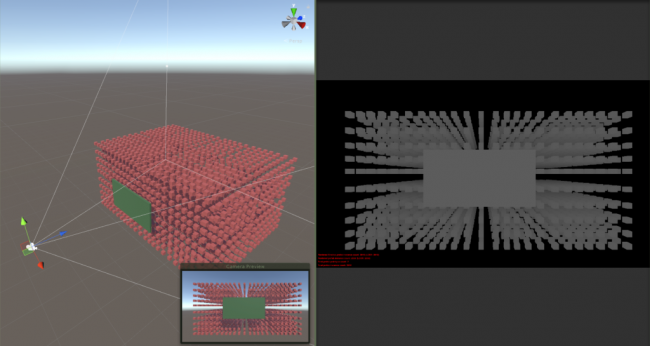

As you can see in the accompanying images, the depth texture is a grayscale representation of the camera view where white is close to the camera and black is far away.

As the distance between the occluder and the instances becomes shorter relative to their distance from the camera, their depth representations become closer to the same color and harder to tell apart.
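The effect can be illustrated numerically. The sketch below uses the standard perspective depth mapping and quantizes it to a fixed number of bits. The function name `quantized_depth`, the near and far plane values, and the 16-bit depth are all assumptions picked for illustration; they do not describe the depth format GPUI or Unity uses on any given platform.

```python
def quantized_depth(z, near=0.3, far=1000.0, bits=16):
    """Map a view-space distance z to an integer depth buffer value.

    Uses the usual non-linear perspective mapping (0 at the near plane,
    1 at the far plane), then rounds to the given bit depth.
    """
    d = (1.0 / near - 1.0 / z) / (1.0 / near - 1.0 / far)
    return round(d * (2 ** bits - 1))


# Close to the camera, objects one unit apart get clearly different values.
near_occluder = quantized_depth(5.0)
near_instance = quantized_depth(6.0)

# Far from the camera, objects one unit apart collapse to the same value.
far_occluder = quantized_depth(500.0)
far_instance = quantized_depth(501.0)

distinguishable_near = near_occluder != near_instance
distinguishable_far = far_occluder != far_instance
```

Under these assumptions, an instance standing one unit behind a distant occluder cannot be told apart from the occluder by depth alone, so a conservative culling scheme has to treat it as potentially visible.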

In short, given the precision of the depth buffer, GPUI culls as many instances as it can for better performance, but without risking culling any visible objects.